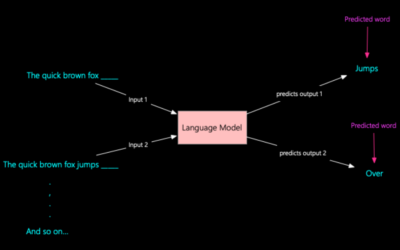

How a Large Language Model (LLM) predicts the next word

A walkthrough of how a Large Language Model (LLM) predicts the next word, including the mathematical operations involved at each step and the corresponding vector and tensor manipulations.

Large Language Models (LLMs) such as GPT (Generative Pre-trained Transformer) are a class of deep learning models that have revolutionized natural language processing (NLP).

Graphics Processing Units (GPUs) have become the backbone of modern computing, powering everything from gaming to artificial intelligence (AI).

In modern computing, the seamless transfer of data between various hardware components is crucial for maintaining system performance and efficiency.

Cryptocurrency has taken the world by storm, evolving from a niche concept into a mainstream financial asset class.

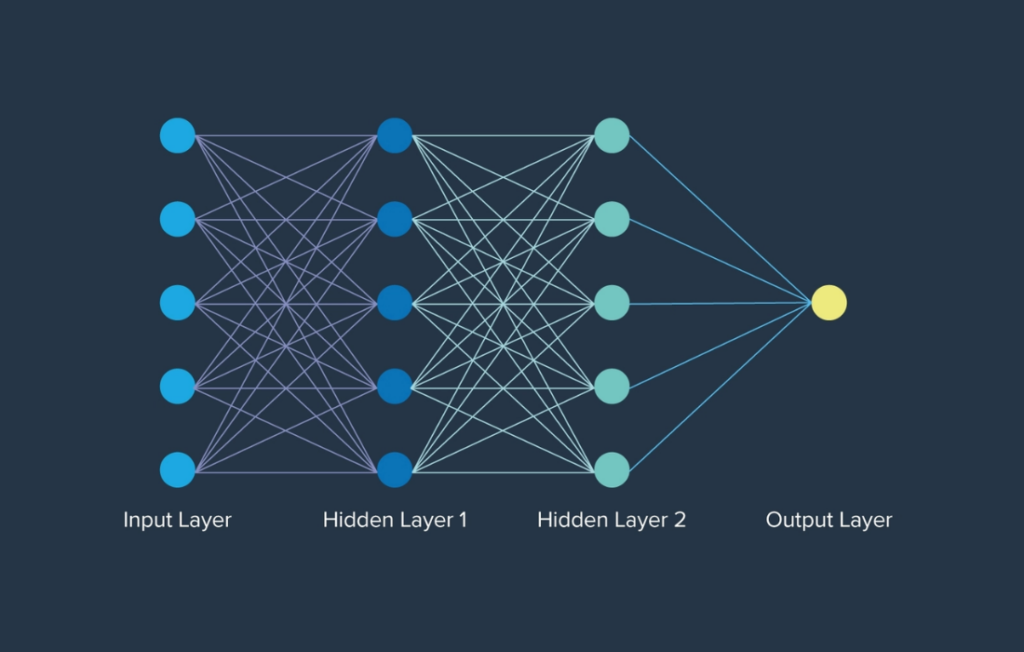

Let’s break down AI, Machine Learning (ML), and Neural Networks in a structured way, covering key concepts, types of ML, model architectures such as Transformers, and their applications.

Machine Learning

Machine Learning is a vast and intricate field that requires an understanding of key concepts from mathematics, statistics, programming, and data science. Let’s go through everything step by step, from the fundamental maths to the essential skills required to build ML models.

A prompt injection attack is a GenAI security threat where an attacker deliberately crafts and inputs deceptive text into a large language model (LLM) to manipulate its outputs. This type of attack exploits the model’s response generation process to achieve unauthorized actions, such as extracting confidential information, injecting false content, or disrupting the model’s intended…

Who Will Shape the Future of Artificial Intelligence? Artificial Intelligence (AI) has become the central battleground for the world’s most powerful tech leaders. Among them, two figures stand out: Mark Zuckerberg, the founder and CEO of Meta (formerly Facebook), and Sam Altman, the CEO of OpenAI. Both men are driving innovation at an unprecedented pace,…

Lecture: The Language of Tensors & Foundational Operations. 1. Motivation: Why We Need This Language. The Data Complexity Problem: In the early days of machine learning, data was often tabular: n_samples × n_features. A vector (one sample) or a matrix (the whole dataset) sufficed. Modern problems are not so simple. An Image: A 224×224 color photo…

In 2017, cybercriminals pulled off one of the most unusual heists in history: they hacked a Las Vegas casino through its internet-connected fish tank. While the casino had invested in top-tier security for its vaults and payment systems, it overlooked a critical vulnerability: a smart aquarium in the lobby. How the Hack Happened The Weak Link – The…

In the golden age of modems and bulky CRT monitors, Kevin Mitnick emerged as a nearly mythical figure. In the early 1980s, he wasn’t crafting viruses—he was crafting lies, charm, cloned phones, and fake IDs. At just twelve, he bypassed LA bus punch-card systems to ride for free. Soon after, he dove into phone phreaking…

Looking for the best Neural Network courses in 2025? You’re in the right place. Below is a hand-picked list of 15 high-quality courses covering everything from fundamentals to advanced topics: PyTorch, TensorFlow, CNNs, RNNs, transformers, and more. I’ve also added useful reads from KNCMAP after each pick so you can reinforce concepts with our own tutorials. New to neural nets? Start with…

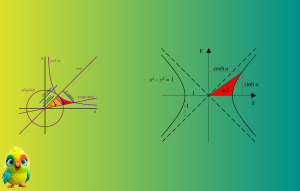

1. Introduction to Hyperbolic Functions Hyperbolic functions are analogs of trigonometric functions but are defined using exponential functions rather than the unit circle. They appear in various areas of mathematics, physics, and engineering, including: – Calculus (integration, differentiation, and differential equations) – Physics (relativity, heat transfer, and wave mechanics) – Engineering (catenary structures, transmission lines)…

In the fast-evolving world of artificial intelligence, rumors spread faster than code compiles. One of the latest headlines making waves suggests that Perplexity AI has made a $34.5 billion bid to acquire Google Chrome — the world’s most widely used web browser. But is this a sign of the next big AI revolution, or just…

The Transformer is a neural network architecture that has fundamentally changed the approach to Artificial Intelligence. It was first introduced in the seminal 2017 paper “Attention Is All You Need” and has since become the go-to architecture for deep learning models, powering text-generative models like OpenAI’s GPT, Meta’s Llama, and Google’s Gemini. Beyond text, the Transformer is also applied in audio generation, image…

Introduction to Shor’s Algorithm Shor’s Algorithm, developed by Peter Shor in 1994, is a quantum algorithm that efficiently factors large integers—a problem believed to be intractable for classical computers. This has profound implications for cryptography, particularly RSA encryption, which relies on the hardness of integer factorization. Why Shor’s Algorithm Matters – Exponential speedup over classical…